In today’s AI-driven world, thermal management isn’t just a technical detail. It’s the unsung hero keeping high-performance hardware running at peak efficiency without melting under pressure. As AI workloads explode, with massive GPU clusters and data center racks pushing power densities beyond 100 kW per rack, traditional air cooling simply can’t keep up. Innovations like direct-to-chip liquid cooling, immersion systems, and AI-optimized predictive controls are transforming how we handle heat in AI hardware, slashing energy use while boosting reliability. At Panasia Solutions, with over 25 years of expertise in electronics design, engineering, and OEM/ODM manufacturing, we partner with clients to integrate these cutting-edge thermal management solutions from concept through mass production – helping turn visionary AI hardware into scalable, market-ready products.

Whether you’re designing next-gen AI accelerators or scaling data center infrastructure, effective thermal management delivers consistent performance. It lowers operating costs and helps meet sustainability goals. Let’s dive into why this matters, explore the latest innovations, and see how forward-thinking manufacturers like Panasia Solutions enable real-world success.

Why Thermal Management Matters More Than Ever for AI Hardware

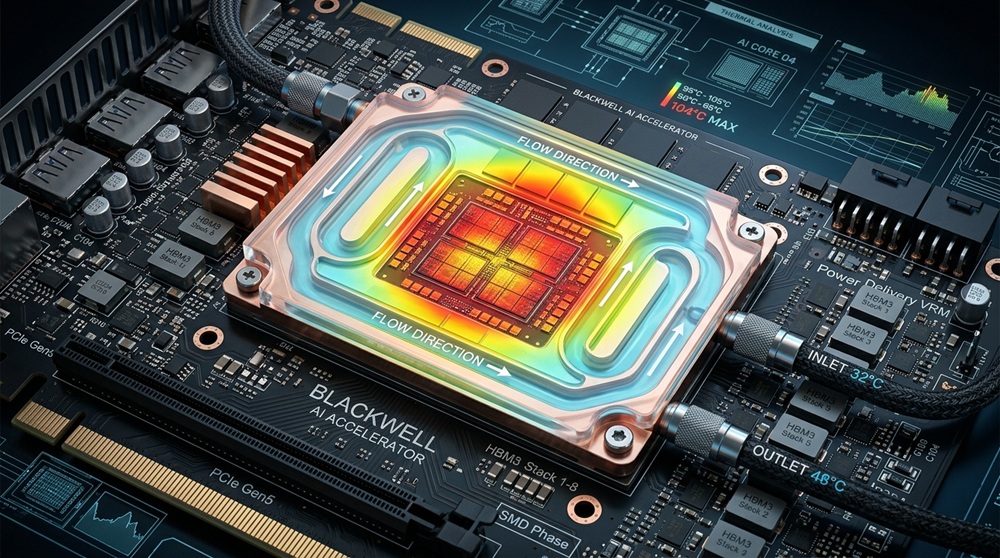

AI hardware generates unprecedented heat. Modern GPUs, such as NVIDIA’s Blackwell B200, feature configurable thermal design power (TDP) ratings that climb to 1,000–1,200 watts per chip, and full racks easily exceed 100 kW in dense configurations. This isn’t just a minor inconvenience: poor thermal management leads to throttling, reduced lifespan, higher failure rates, and skyrocketing energy bills. In fact, cooling can account for up to 40% of a data center’s total power consumption, which makes it a critical factor in both performance and profitability.
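
To put the 40% figure in perspective, it can be translated into power usage effectiveness (PUE), the ratio of total facility power to IT equipment power. The Python sketch below is a rough illustration only: the function name is hypothetical, and it assumes by default that cooling is the only overhead (no lighting or power-distribution losses), which real facilities will not match exactly.

```python
def pue_from_cooling_share(cooling_share: float,
                           other_overhead_share: float = 0.0) -> float:
    """Estimate PUE (total facility power / IT power).

    Both shares are fractions of TOTAL facility power. Treating cooling as
    the only overhead (other_overhead_share=0) is a simplifying assumption.
    """
    it_share = 1.0 - cooling_share - other_overhead_share
    if it_share <= 0:
        raise ValueError("overhead shares must leave some power for the IT load")
    return 1.0 / it_share


# Compare a cooling-heavy facility with leaner ones (shares are illustrative).
for share in (0.40, 0.20, 0.10):
    print(f"cooling share {share:.0%} -> PUE of about "
          f"{pue_from_cooling_share(share):.2f}")
```

Under these assumptions, a facility that spends 40% of its power on cooling sits near a PUE of 1.67, while cutting the cooling share to 10% brings it close to 1.11.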

The numbers tell a compelling story. Global data center energy demand is surging. AI workloads are projected to drive significant increases in electricity use. According to Precedence Research’s analysis of the thermal management for data centers market, the sector is expected to grow from USD 15.01 billion in 2025 to USD 33.72 billion by 2034. This represents a CAGR of 9.41%. The growth is driven by the need for energy-efficient solutions to handle rising heat from AI and high-performance computing. Liquid cooling solutions are gaining traction. They transfer heat far more efficiently than air. This shift isn’t optional for hyperscale operators tackling generative AI training and inference. It is essential for staying competitive while controlling costs and carbon footprints.
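
As a quick sanity check on the growth figures quoted above, the compound annual growth rate can be recomputed from the start and end values. This is a small Python sketch; the helper name is hypothetical, and it assumes nine compounding periods between 2025 and 2034.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Market size figures (USD billions) as quoted in the text.
rate = cagr(start_value=15.01, end_value=33.72, years=2034 - 2025)
print(f"Implied CAGR: {rate:.2%}")
```

The result comes out at roughly 9.4% per year, consistent with the quoted rate.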

Panasia Solutions understands these pressures firsthand through our work in high-performance electronics. Our engineering teams specialize in design-for-manufacturability (DFM), an approach that factors in thermal management early in the development cycle. It ensures AI hardware prototypes scale seamlessly to production volumes without thermal surprises.

The Core Challenges in AI Hardware Thermal Management

AI hardware faces unique thermal hurdles that traditional designs never encountered:

- Exploding Power Densities: Single AI servers now pack multiple high-TDP GPUs, creating localized hotspots that air cooling struggles to dissipate evenly.

- Energy and Sustainability Pressures: Cooling systems consume massive resources – up to 40% of a facility’s electricity – while water usage in evaporative systems raises environmental concerns.

- Scalability and Reliability: As racks push toward 140 kW and beyond, downtime from overheating becomes unacceptable for always-on AI applications.

- Miniaturization Conflicts: In edge AI devices and compact servers, space constraints amplify heat buildup, demanding innovative integration of cooling components.

These challenges explain why the thermal management market for data centers is expanding rapidly. As highlighted in Technavio’s liquid cooling for AI data centers market report, the segment is set to increase by USD 1.88 billion from 2024 to 2029 at a CAGR of 31.4%, fueled by the escalating TDP and compute density of AI accelerators, which surpass traditional air cooling limits.

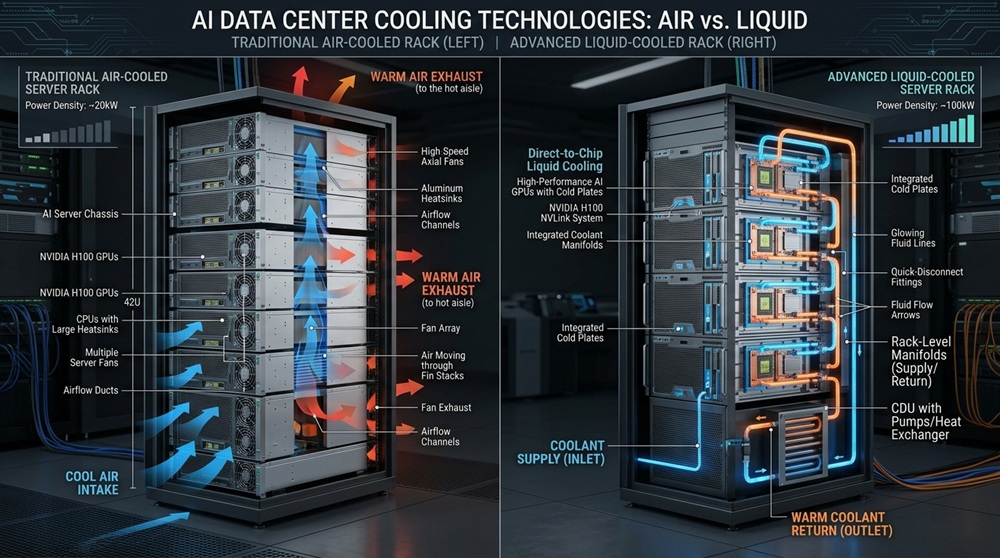

Traditional Air Cooling vs. Next-Generation Innovations

Air cooling – fans, heat sinks, and CRAC units – served the industry well for decades. But with AI hardware’s thermal loads, it hits hard limits around 20–30 kW per rack. Beyond that, you need massive airflow volumes and higher fan power (increasing noise and energy use), and you still risk hotspots.
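
The airflow problem can be made concrete with the basic heat balance: volumetric flow = heat load / (air density × specific heat × temperature rise). The Python sketch below is a back-of-the-envelope estimate, not a design tool. The air properties are approximate room-temperature values, and the 15 K inlet-to-outlet temperature rise is an assumption chosen for illustration.

```python
AIR_DENSITY = 1.2      # kg/m^3, approximate value near room temperature
AIR_CP = 1005.0        # J/(kg*K), approximate specific heat of air
M3S_TO_CFM = 2118.88   # cubic feet per minute in one m^3/s


def airflow_m3_per_s(heat_watts: float, delta_t_kelvin: float) -> float:
    """Volumetric airflow needed to carry away heat_watts at a given air temperature rise."""
    return heat_watts / (AIR_DENSITY * AIR_CP * delta_t_kelvin)


# Assumed 15 K rise across the rack; real designs vary.
for rack_kw in (10, 30, 100):
    flow = airflow_m3_per_s(rack_kw * 1000, delta_t_kelvin=15.0)
    print(f"{rack_kw:>4} kW rack: {flow:.2f} m^3/s (~{flow * M3S_TO_CFM:,.0f} CFM)")
```

Because the required airflow scales linearly with heat load, a 100 kW rack needs more than three times the air volume of a 30 kW rack at the same temperature rise, which is where fan power and noise become impractical.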

Enter the innovations redefining thermal management for AI hardware:

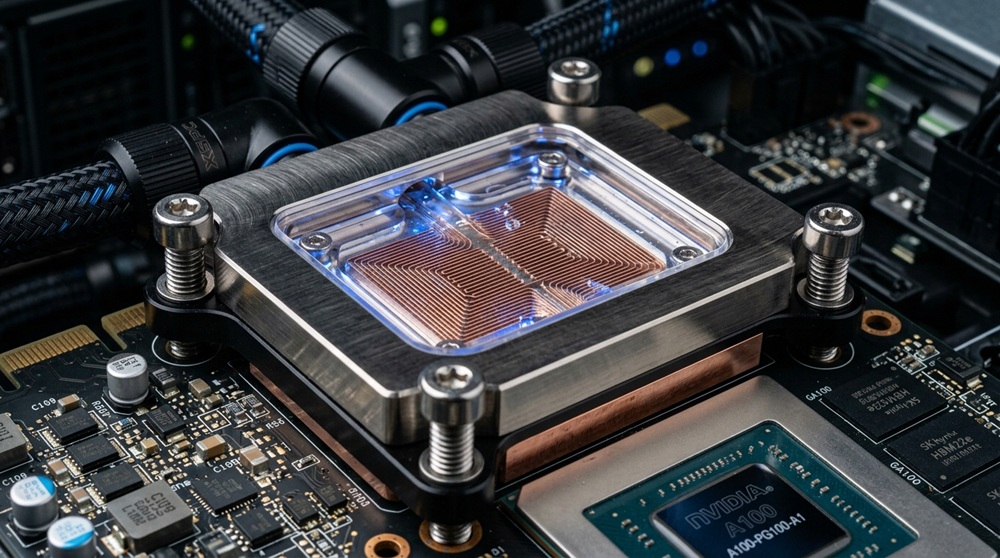

- Direct-to-Chip (D2C) Liquid Cooling: Cold plates sit directly on CPUs and GPUs, circulating chilled fluid to capture heat at the source. This approach achieves high heat capture rates and supports rack densities far beyond air limits.

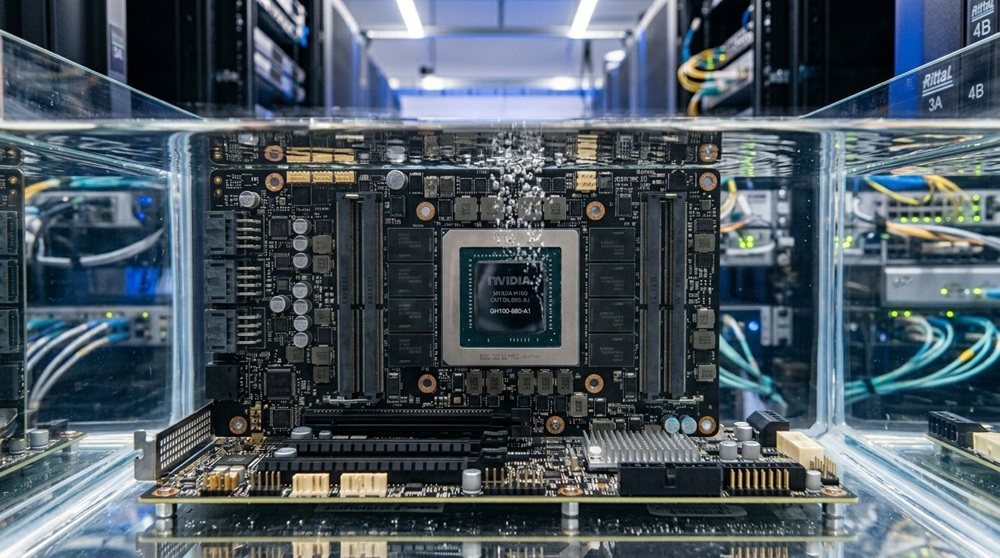

- Immersion Cooling: Servers are submerged in dielectric fluids (single- or two-phase), eliminating air gaps and enabling uniform cooling even in ultra-dense setups.

- Hybrid and Rear-Door Heat Exchangers: Combine air and liquid for retrofits, capturing exhaust heat efficiently without full infrastructure overhauls.

- Advanced Thermal Interface Materials (TIMs): Graphene-enhanced compounds, liquid metal TIMs, and phase-change materials dramatically improve heat transfer between chips and coolers.
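
To see why TIM conductivity matters, a one-dimensional conduction estimate is useful: the temperature drop across the layer is roughly heat flux × thickness / thermal conductivity. The Python sketch below uses purely illustrative inputs. The heat flux, bond-line thickness, and both conductivity values are assumptions rather than data for any specific product, and contact resistance at the interfaces is ignored.

```python
def tim_temperature_drop(heat_flux_w_per_cm2: float,
                         thickness_um: float,
                         conductivity_w_per_mk: float) -> float:
    """Temperature drop (K) across a TIM layer from 1-D steady-state conduction."""
    heat_flux = heat_flux_w_per_cm2 * 1e4   # W/cm^2 -> W/m^2
    thickness = thickness_um * 1e-6         # micrometres -> metres
    return heat_flux * thickness / conductivity_w_per_mk


# Hypothetical comparison: same 50 um bond line, two assumed conductivities.
for label, k in (("conventional paste (assumed 5 W/m-K)", 5.0),
                 ("high-conductivity TIM (assumed 40 W/m-K)", 40.0)):
    drop = tim_temperature_drop(heat_flux_w_per_cm2=100.0,
                                thickness_um=50.0,
                                conductivity_w_per_mk=k)
    print(f"{label}: about {drop:.1f} K across the layer")
```

With these assumed numbers, the higher-conductivity material reduces the drop across the interface from around 10 K to a little over 1 K, headroom that can go toward higher clock speeds or warmer coolant.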

These solutions aren’t futuristic – they’re being deployed now in leading AI facilities, delivering significant reductions in cooling energy and enabling higher compute densities. IDTechEx’s Thermal Management for Data Centers 2025-2035 report forecasts that the annual market for direct-to-chip cooling alone will exceed US$50 billion by 2035, with AI applications driving the strongest growth due to extreme power densities.

Breakthrough Innovations Shaping the Future of Thermal Management

The pace of thermal management innovation is accelerating to match AI demands. Here’s a closer look at game-changing technologies:

- Direct-to-Chip Liquid Cooling Systems. D2C remains the fastest-growing segment, with cold plates engineered for heat fluxes of 200–300 W/cm² and above. Companies are integrating microfluidic channels and quick-disconnect manifolds for easier maintenance. For AI hardware, this means sustaining 1,200 W+ GPUs at safe temperatures while cutting PUE closer to the ideal 1.0.

- Immersion and Two-Phase Cooling. Single-phase immersion uses non-conductive fluids that stay liquid; two-phase leverages boiling for even higher efficiency. These systems excel in modular data centers and edge deployments, where space and water conservation matter. Early adopters report substantial energy savings compared to air cooling.

- AI-Driven Predictive Thermal Controls. Smart sensors, machine learning algorithms, and real-time workload monitoring dynamically adjust coolant flow, fan speeds, and pump operations. This “AI cooling AI” approach prevents over-cooling and optimizes for actual demand – potentially slashing cooling costs by 30% or more.

- Advanced Materials and Sustainable Designs. From high-conductivity TIMs to eco-friendly coolants and recyclable enclosures, material science is key. Photonics integration (light-based signaling) also reduces heat by avoiding resistive losses in electrical interconnects – an area where Panasia Solutions brings proven expertise in high-speed AI product development.
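
The predictive-control idea can be sketched in a few lines of Python. This is a deliberately simplified stand-in, not a production controller. An exponentially weighted moving average replaces the machine learning forecaster, the coolant is treated as plain water with approximate properties, and the class name, pump limits, and 10 K target temperature rise are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass

WATER_DENSITY = 997.0   # kg/m^3, approximate (plain water assumed)
WATER_CP = 4186.0       # J/(kg*K), approximate


@dataclass
class FlowController:
    """Feed-forward coolant flow setpoint driven by a smoothed power forecast."""
    target_delta_t: float = 10.0   # K, assumed coolant temperature rise
    min_flow_lpm: float = 0.5      # assumed pump lower limit, litres/minute
    max_flow_lpm: float = 5.0      # assumed pump upper limit, litres/minute
    alpha: float = 0.5             # smoothing factor for the forecast
    predicted_watts: float = 0.0   # running forecast of chip power

    def update(self, measured_watts: float) -> float:
        # EWMA forecast: a simple placeholder for an ML workload predictor.
        self.predicted_watts = (self.alpha * measured_watts
                                + (1.0 - self.alpha) * self.predicted_watts)
        # Flow needed to absorb the forecast heat at the target temperature rise.
        m3_per_s = self.predicted_watts / (
            WATER_DENSITY * WATER_CP * self.target_delta_t)
        flow_lpm = m3_per_s * 1000.0 * 60.0
        # Clamp to the pump's operating range.
        return min(self.max_flow_lpm, max(self.min_flow_lpm, flow_lpm))


controller = FlowController()
for watts in (300, 900, 1200, 1200, 400):   # made-up GPU power samples
    setpoint = controller.update(watts)
    print(f"measured {watts:>4} W -> flow setpoint {setpoint:.2f} L/min")
```

Sizing flow to forecast demand rather than running pumps at a fixed worst-case rate is what avoids over-cooling. A real system would add feedback from temperature sensors and a far better predictor.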

At Panasia Solutions, our manufacturing capabilities shine here. We help clients prototype and scale these innovations using SMT, CNC machining, custom molds, and rigorous thermal validation testing. Our end-to-end process – from DFM reviews that optimize for thermal management to certified mass production – ensures your AI hardware meets global standards without delays.

How Panasia Solutions Delivers Thermal Management Leadership in AI Hardware

With roots tracing back over 25 years and a global footprint spanning Asia, North America, and beyond, Panasia Solutions isn’t just a manufacturer – we’re your strategic engineering partner for AI hardware success. Our teams excel in PCBA design, miniaturization (critical for managing heat in compact AI edge devices), high-speed interconnects that reduce power-related heat, and full-system integration of cooling components.

Clients rely on us to:

- Prototype thermal-optimized enclosures and cold-plate assemblies

- Scale production of AI server modules with integrated liquid cooling channels

- Ensure IP-protected, ISO-compliant manufacturing that accelerates time-to-market

- Incorporate sustainable practices like circular economy design for recyclable cooling hardware

By embedding thermal management considerations from day one, we help clients avoid costly redesigns and deliver hardware that’s efficient, reliable, and ready for the AI era.

Real-World Impact and Industry Outlook

Leading hyperscalers and AI innovators are already reaping benefits. Liquid cooling adoption has surged, with projections showing continued strong growth through the next decade. Future trends point to even higher integration: embedded microchannel cooling, superfluid immersion, and fully AI-orchestrated systems that self-optimize across entire facilities.

The bottom line? Investing in advanced thermal management today future-proofs your AI hardware against escalating demands while delivering measurable ROI through energy savings and performance gains.

Ready to Cool Your AI Ambitions?

Thermal management innovations are no longer optional – they’re the foundation for sustainable, high-performance AI hardware. At Panasia Solutions, we’re proud to empower our partners with the design, engineering, and manufacturing expertise needed to implement these solutions at scale.

We’re committed to helping you navigate this landscape. Browse our services or contact our team today to discuss how we can bring your vision to life.