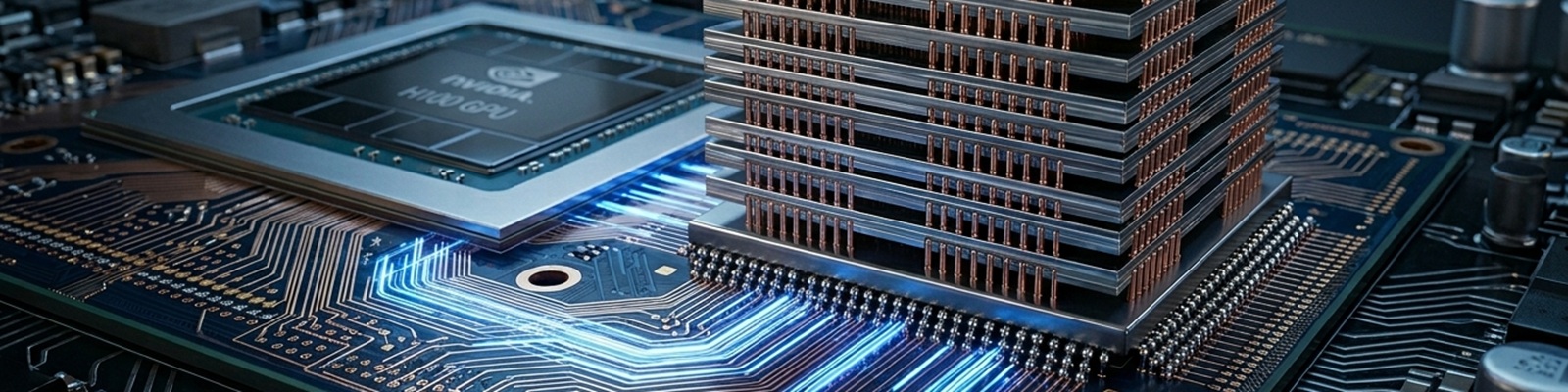

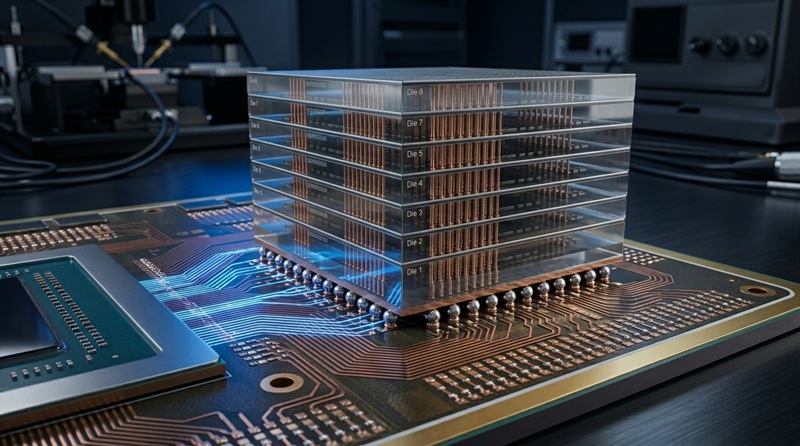

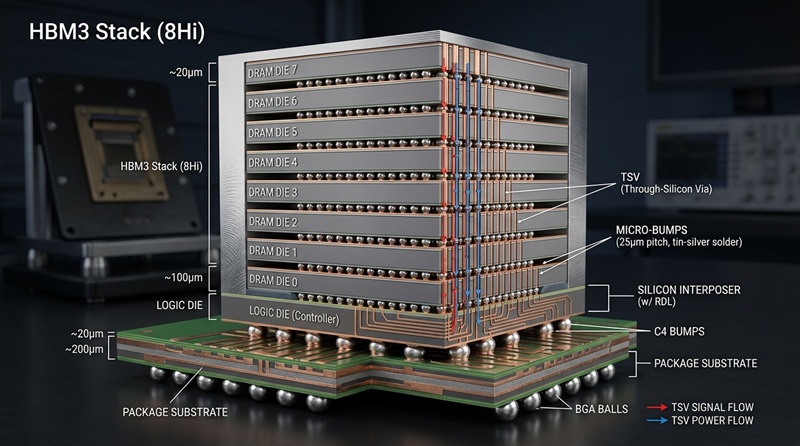

High-Bandwidth Memory (HBM) is at the heart of today’s artificial intelligence hardware revolution. It delivers the data throughput and capacity that AI accelerators, GPUs, and custom ASICs need to train and run large language models, generative AI, and real-time inference workloads efficiently. Unlike conventional DRAM modules, HBM stacks multiple DRAM dies vertically and connects them with Through-Silicon Vias (TSVs), so a package carrying several stacks can reach terabytes-per-second bandwidth in a compact footprint while using less energy per bit transferred.

Panasia Solutions helps manufacturers successfully integrate HBM into AI devices. With over 25 years of expertise in high-tech consumer electronics, advanced semiconductor packaging, and precision manufacturing from our Shenzhen headquarters, we offer end-to-end support – from design-for-manufacturability (DFM) optimized for HBM to high-yield assembly and scalable production of complex AI hardware.

The Explosive Growth of HBM AI Applications

Demand for HBM in AI applications has surged with the rise of generative AI and high-performance computing. Published estimates vary widely by forecaster: they value the global High-Bandwidth Memory market at roughly USD 3.17–9.50 billion in 2025 and project USD 12.44–69.75 billion by 2031–2035, with CAGRs of 25–31%. AI and machine learning segments dominate, with reported shares of 55–78% of total HBM consumption, driven primarily by the training and inference needs of large models.
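To make the growth figures easier to sanity-check, here is a minimal sketch of the compound annual growth rate (CAGR) arithmetic. The pairing of endpoints is an assumption: the forecasts quoted above do not say which 2025 value belongs with which target year, so the example simply pairs the low end of each range over 2025 to 2031.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.25 means 25% per year)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value reached after compounding `rate` for `years` years."""
    return start_value * (1 + rate) ** years


# Assumed pairing (not stated in the forecasts): low ends of both ranges,
# USD 3.17 billion in 2025 growing to USD 12.44 billion in 2031 (6 years).
low_rate = cagr(3.17, 12.44, 6)
print(f"Implied CAGR for the assumed low-end pairing: {low_rate:.1%}")
```

Under that assumed pairing, the implied rate lands near the bottom of the quoted 25–31% range. Other pairings give different rates, which is one reason the headline ranges are so wide.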

This growth is fuelled by hyperscalers and AI chip designers adopting HBM3E and preparing for HBM4. Supply remains constrained even as vendors like SK hynix, Samsung, and Micron ramp up production, because demand continues to outpace capacity.

What Makes HBM Ideal for AI Devices?

HBM’s 3D-stacked architecture with ultra-wide interfaces gives it clear advantages in AI hardware:

- Exceptional Bandwidth. More than 1 TB/s per stack with HBM3E and 2 TB/s or more with HBM4, enabling faster data movement for parallel AI computations.

- Improved Power Efficiency. Shorter interconnect distances reduce energy consumption per bit transferred.

- Compact Footprint. Critical for co-packaging with logic dies on silicon interposers in 2.5D and emerging 3D configurations.

- High Capacity. Multi-die stacks (8–16+ layers) provide the large memory pools AI models require without excessive board space.

These features help break the “memory wall” that traditionally limits AI performance.
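The bandwidth figures and the “memory wall” can be illustrated with a little arithmetic. The sketch below is a rough model, not vendor data: peak stack bandwidth is taken as interface width times per-pin data rate, and attainable compute follows a simple roofline estimate. The interface widths, pin rates, stack count, and the 1,000 TFLOPS accelerator are illustrative assumptions.

```python
def stack_bandwidth_gb_s(interface_bits: int, pin_rate_gbit_s: float) -> float:
    """Peak bandwidth of one stack in GB/s: width (bits) x per-pin rate (Gbit/s) / 8."""
    return interface_bits * pin_rate_gbit_s / 8


def attainable_tflops(peak_tflops: float, memory_tb_s: float, flops_per_byte: float) -> float:
    """Roofline estimate: throughput is capped by compute or by memory traffic."""
    return min(peak_tflops, memory_tb_s * flops_per_byte)


# Assumed, illustrative interface parameters (check the datasheet for real parts).
hbm3e_gb_s = stack_bandwidth_gb_s(interface_bits=1024, pin_rate_gbit_s=9.6)
hbm4_gb_s = stack_bandwidth_gb_s(interface_bits=2048, pin_rate_gbit_s=8.0)

# Hypothetical accelerator: 8 stacks at the HBM4-like rate, 1,000 TFLOPS peak compute.
package_tb_s = 8 * hbm4_gb_s / 1000

# A low-arithmetic-intensity kernel (2 FLOPs per byte moved) is memory-bound,
# while a high-intensity kernel (500 FLOPs per byte) reaches the compute peak.
memory_bound = attainable_tflops(1000.0, package_tb_s, flops_per_byte=2.0)
compute_bound = attainable_tflops(1000.0, package_tb_s, flops_per_byte=500.0)

print(f"HBM3E-like stack: {hbm3e_gb_s:.1f} GB/s, HBM4-like stack: {hbm4_gb_s:.1f} GB/s")
print(f"Memory-bound kernel: {memory_bound:.1f} TFLOPS of a 1000 TFLOPS peak")
print(f"Compute-bound kernel: {compute_bound:.1f} TFLOPS")
```

In this model the memory-bound kernel reaches only a small fraction of peak compute, and more memory bandwidth raises that ceiling directly.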

Key HBM AI Applications

HBM powers a wide range of AI systems today, and its use is expected to expand further:

- AI Training Accelerators. High-end GPUs and custom chips use multiple HBM stacks to handle massive parallel matrix operations for model training.

- Inference Engines. Real-time applications in autonomous vehicles, robotics, recommendation systems, and edge servers benefit from low-latency, high-bandwidth memory access.

- High-Performance Computing (HPC). Scientific simulations, climate modelling, and data analytics leverage HBM’s speed and capacity.

- Edge AI Devices. Compact modules for industrial cameras, drones, smart infrastructure, and on-device generative AI where power and size are constrained.

- Next-Generation Architectures. Hybrid bonding and advanced packaging will enable even denser integration for future AI hardware.

These uses make HBM essential for turning raw compute into practical, scalable intelligence.
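As a rough sizing aid for the training and inference cases above, the following sketch estimates how many stacks are needed to hold a model’s weights and gives an upper bound on single-stream decode speed when every generated token requires reading all weights once. All inputs are illustrative assumptions (a 70-billion-parameter model at 2 bytes per parameter, 12-die stacks of 24 Gbit dies, 1,200 GB/s per stack), and the estimate ignores activations, KV cache, and any overlap between compute and memory traffic.

```python
import math


def stack_capacity_gb(dies_per_stack: int, die_density_gbit: float) -> float:
    """Capacity of one stack in GB (die count x die density in Gbit / 8)."""
    return dies_per_stack * die_density_gbit / 8


def stacks_needed(params_billion: float, bytes_per_param: float, capacity_gb: float) -> int:
    """Minimum number of stacks that can hold the model weights alone."""
    weights_gb = params_billion * bytes_per_param  # 1e9 params x bytes = GB
    return math.ceil(weights_gb / capacity_gb)


def decode_tokens_per_s_upper_bound(bandwidth_gb_s: float, weights_gb: float) -> float:
    """Bandwidth-bound ceiling: each token reads every weight once from memory."""
    return bandwidth_gb_s / weights_gb


# Assumed example values, not measurements.
capacity = stack_capacity_gb(dies_per_stack=12, die_density_gbit=24)   # 36 GB
weights_gb = 70 * 2                                                    # 140 GB
n_stacks = stacks_needed(params_billion=70, bytes_per_param=2, capacity_gb=capacity)
ceiling = decode_tokens_per_s_upper_bound(n_stacks * 1200.0, weights_gb)

print(f"Stack capacity: {capacity:.0f} GB, stacks needed for weights: {n_stacks}")
print(f"Bandwidth-bound decode ceiling: {ceiling:.1f} tokens/s per stream")
```

Real deployments batch requests and cache intermediate state, so measured throughput will differ. The sketch only shows why both capacity and bandwidth per stack matter.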

Major Challenges in HBM Integration and Manufacturing

Despite its performance benefits, integrating HBM into AI hardware presents notable manufacturing and design challenges:

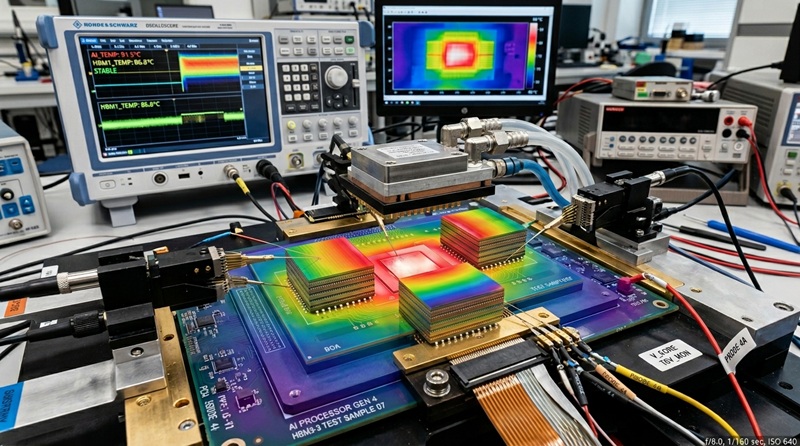

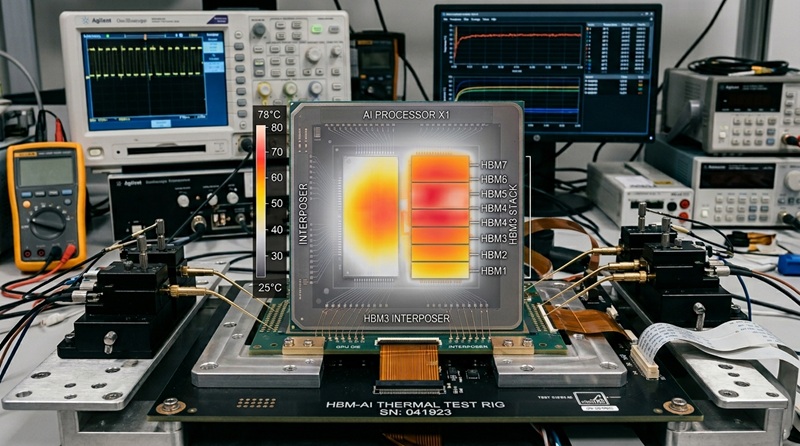

- Thermal Management. High power density in stacked dies creates hotspots; advanced cooling solutions like microchannels or improved thermal interface materials are often required.

- Complex Packaging. Precise 2.5D interposer assembly, alignment, and hybrid bonding increase complexity and cost.

- Yield and Cost Issues. 3D stacking and thin-wafer processing can lower yields, with HBM sometimes representing over 50% of an AI accelerator’s total cost.

- Supply Chain Constraints. Limited suppliers and production capacity lead to ongoing shortages.

- Signal Integrity and Power Delivery. High-speed signalling and stable power delivery must be maintained across tall stacks in dense packages.

- Scalability for Volume Production. Balancing cutting-edge performance with reliable, cost-effective manufacturing at scale.

Addressing these challenges demands specialized expertise in advanced packaging and precision assembly.
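The yield challenge is easiest to see with a simplified compounding model. The sketch below assumes that every die and every bonding step succeeds or fails independently, which real processes only approximate, and the 99% yields and the cost figure are hypothetical placeholders rather than industry data.

```python
def stack_yield(die_yield: float, bond_yield: float, layers: int) -> float:
    """Probability that a stack works: all dies good and all (layers - 1) bonds good."""
    if layers < 1:
        raise ValueError("layers must be at least 1")
    return die_yield ** layers * bond_yield ** (layers - 1)


def cost_per_good_stack(cost_per_built_stack: float, yield_fraction: float) -> float:
    """Effective cost once scrapped stacks are spread over the good ones."""
    return cost_per_built_stack / yield_fraction


# Hypothetical inputs: 99% known-good-die yield and 99% yield per bonding step.
for layers in (8, 12, 16):
    y = stack_yield(die_yield=0.99, bond_yield=0.99, layers=layers)
    cost = cost_per_good_stack(100.0, y)  # 100.0 is an arbitrary cost unit
    print(f"{layers:2d} layers: stack yield {y:.1%}, cost per good stack {cost:.1f}")
```

Even with high per-step yields, losses compound as stacks get taller, which is why die testing before stacking and tight process control have a large effect on cost.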

How Panasia Solutions Leads in HBM AI Applications Manufacturing

Panasia Solutions is a reliable partner for companies developing HBM AI applications. Our 25+ years of experience in high-precision electronics include advanced packaging, interposer handling, thermal solutions, and ISO-compliant mass production for complex semiconductor devices.

We support clients with:

- DFM feedback optimized specifically for HBM integration, interposers, and thermal performance.

- Precision PCBA, cleanroom assembly, and testing for 2.5D/3D packages.

- Rigorous validation of signal integrity, power delivery, and long-term reliability.

- Global supply chain management to mitigate component shortages.

- Scalable production from prototypes to high volumes while protecting intellectual property.

Our Shenzhen-based capabilities help transform challenging HBM designs into reliable, high-performing AI hardware ready for market.

The Future of HBM in AI Devices

HBM4 and future generations are expected to feature taller stacks, hybrid bonding for better thermal and electrical performance, and tighter integration with co-packaged optics. As AI models continue to grow, HBM will remain critical for overcoming memory bottlenecks. Innovations in cooling, materials science, and manufacturing processes will extend HBM-based AI hardware from data centres into more intelligent edge devices.

Panasia Solutions is actively investing in these next-generation capabilities to keep our partners at the forefront of AI hardware development.

Ready to overcome the complexities of HBM AI applications and bring powerful AI devices to market? Browse our capabilities or contact our expert team today for a consultation. Let’s build the high-performance hardware powering the AI future.